A/B Testing Agency

A/B testing with statistical rigour, not gut calls.

We calculate sample size before launch and do not peek at results midway. Most agencies do neither, and call winners on 50 visitors.

- Pre-launch sample size + duration calc

- No mid-test peeking, no false winners

- Server-side variants in Liquid or React PRs

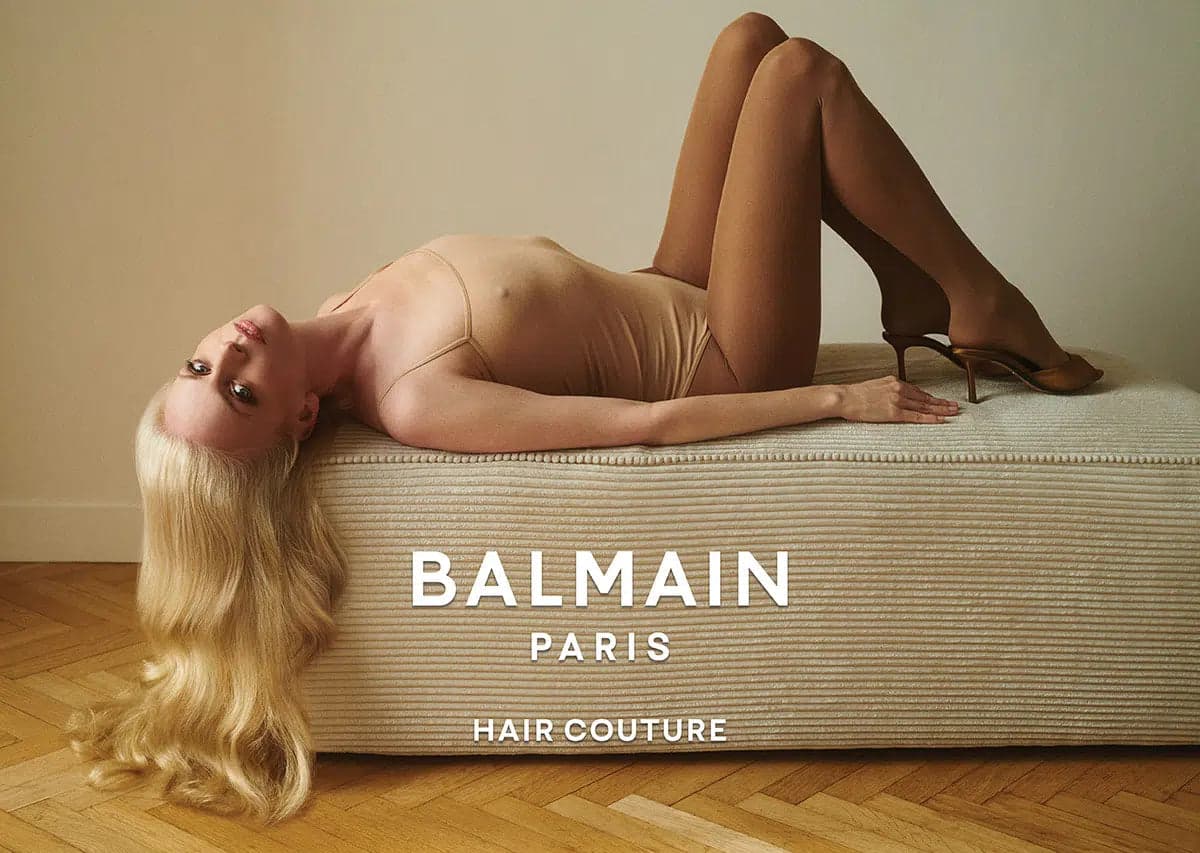

Trusted by ambitious brands worldwide

SEMrush agency

Rated #1 SEO agency in The Netherlands by SEMrush

100% Dedicated to SEO

SEO is all we do, and we're good at it

Most agencies call A/B test winners on 50 visitors. And then wonder why the lift never shows up in revenue. We refuse to do that.

An A/B test only earns its name when the sample size, the run window, the success metric and the segment cuts are agreed before the test goes live. We run testing programs for ecommerce, B2B SaaS and lead-gen sites where every single experiment has a one-page test plan signed off before code is written. Industry average win rate is 1 in 8. Anything materially higher than that is either peeking, p-hacking, or both.

How an A/B testing engagement runs

How a LASEO A/B testing program runs

Five distinct phases per test, repeated across a rolling quarterly backlog. Every test starts with a one-page plan signed off by your team and ends with a winner implementation PR or a documented loser archived in the hypothesis library.

- 01

Hypothesis library + impact-to-effort scoring

We maintain a shared Notion hypothesis library per client. Every proposed test is scored on impact (predicted lift in %), effort (engineering hours) and confidence (evidence from analytics, user research or competitor patterns). Each quarter the highest-scoring 8 to 12 hypotheses promote to the active backlog. The library is searchable across all past tests, so you never re-test the same idea.
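As a rough illustration of impact-to-effort scoring, the sketch below ranks hypotheses and promotes the top entries to a backlog. The formula (predicted lift times confidence, divided by engineering hours), the `Hypothesis` fields and the example entries are assumptions made for this example, not the exact scoring used in the library.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    name: str
    impact_pct: float    # predicted lift in percent
    effort_hours: float  # engineering hours
    confidence: float    # 0.0 to 1.0, strength of the supporting evidence


def score(h: Hypothesis) -> float:
    # Assumed formula: confidence-weighted lift per engineering hour.
    return h.impact_pct * h.confidence / h.effort_hours


def promote(library: list[Hypothesis], limit: int = 12) -> list[Hypothesis]:
    # Highest score first; the top `limit` entries form the quarterly backlog.
    return sorted(library, key=score, reverse=True)[:limit]


# Hypothetical entries, for illustration only.
library = [
    Hypothesis("Sticky add-to-cart on mobile", impact_pct=4.0, effort_hours=16, confidence=0.7),
    Hypothesis("Rewrite pricing page headline", impact_pct=2.0, effort_hours=2, confidence=0.5),
    Hypothesis("One-page checkout", impact_pct=8.0, effort_hours=80, confidence=0.6),
]
backlog = promote(library, limit=2)
```

Dividing by effort means a cheap copy test can outrank a large rebuild with a bigger predicted lift, which is usually the point of impact-to-effort scoring.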

- 02

Pre-launch sample size + duration calculation

For each promoted hypothesis we run the Optimizely or VWO sample size calculator. Inputs: baseline conversion rate from GA4, target minimum detectable effect, 95% confidence, 80% power. Output: required visitors per variant and the run window. If the page does not get enough traffic to hit the sample inside 6 weeks, the test is rejected or scoped to a higher-traffic surface.
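For readers who want to see what such a calculator does under the hood, here is a minimal sketch using the standard normal-approximation formula for comparing two proportions. It is not the Optimizely or VWO implementation, and their outputs may differ slightly. The function name and the example inputs (3% baseline, 10% relative minimum detectable effect) are assumptions.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(baseline: float, relative_mde: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors needed per variant for a two-sided two-proportion test."""
    p1 = baseline
    p2 = baseline * (1 + relative_mde)       # rate we want to be able to detect
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # 95% confidence -> about 1.96
    z_beta = NormalDist().inv_cdf(power)           # 80% power -> about 0.84
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Hypothetical inputs: 3% baseline conversion, 10% relative lift to detect.
n = sample_size_per_variant(baseline=0.03, relative_mde=0.10)
```

With these inputs the result lands in the low 50,000s per variant. Halving the minimum detectable effect roughly quadruples the requirement, which is why low-traffic pages often fail the six-week check.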

- 03

Server-side or client-side variant build

Default: server-side via GrowthBook or Statsig with feature flags read at the edge or server render. For Shopify Plus, server-side variants live in Liquid behind a feature flag. Client-side via VWO or Convert is reserved for headline or copy tests on low-stakes surfaces where flicker risk is acceptable. Every test ships with GA4 variant-exposure custom dimensions wired up before launch.
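Server-side assignment generally relies on deterministic bucketing, so the same visitor always gets the same variant without any client-side script. The sketch below shows the general idea with a plain hash. It is not the GrowthBook or Statsig hashing scheme, and `assign_variant`, the experiment key and the user IDs are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment_key: str,
                   variants: tuple[str, ...] = ("control", "treatment")) -> str:
    """Map a user to a variant deterministically and roughly uniformly."""
    digest = hashlib.sha256(f"{experiment_key}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # first 32 bits scaled to [0, 1)
    return variants[int(bucket * len(variants))]


# The same user and experiment always resolve to the same variant.
first = assign_variant("user-123", "pdp-sticky-atc")
second = assign_variant("user-123", "pdp-sticky-atc")
```

Including the experiment key in the hash input keeps assignments independent across experiments, so a visitor in the treatment arm of one test is not systematically in the treatment arm of the next.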

- 04

Test execution + strict no-peek monitoring

Once a test is live, the only people checking results are senior consultants verifying that the test is collecting variant exposure correctly. No statistical reads, no mid-test screenshots to the client, no peeking. Unless sequential testing with alpha spending was agreed in the test plan, we wait for the calculated sample size before any conclusion is drawn.
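Where a sequential plan is agreed, an alpha spending function decides how much of the 5% false positive budget may be used at each interim look. The sketch below uses the Lan-DeMets O'Brien-Fleming-type spending function, which is one common choice and an assumption here, since the test plan could specify a different one. It returns the cumulative alpha spent, not the per-look decision boundary, which needs numerical integration and is better left to a statistics package.

```python
from statistics import NormalDist


def obf_alpha_spent(information_fraction: float, alpha: float = 0.05) -> float:
    """Cumulative two-sided alpha spent once a fraction of the sample is in."""
    if not 0 < information_fraction <= 1:
        raise ValueError("information_fraction must be in (0, 1]")
    z = NormalDist().inv_cdf(1 - alpha / 2)
    return 2 * (1 - NormalDist().cdf(z / information_fraction ** 0.5))


# Cumulative alpha available at 25%, 50%, 75% and 100% of the planned sample.
schedule = {t: obf_alpha_spent(t) for t in (0.25, 0.5, 0.75, 1.0)}
```

Almost none of the budget is available early, so an interim look only stops the test on an overwhelming result, and the full 5% is reached only at the planned sample.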

- 05

Winner implementation PR + post-launch monitoring

When the calculated sample is reached and the result is significant at the planned threshold, we produce a one-page test result PDF with full statistical analysis (lift %, confidence interval, segment-level reads). The winning variant ships as a Liquid or React PR into your production repo. Losing variants are archived in the hypothesis library with reasoning, so the same idea is not retried blind.
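The core numbers in a result read (lift, confidence interval, significance) can be sketched with a two-proportion z-test. This is a simplified illustration, not the full analysis in the result PDF. The function name and the visitor and conversion counts are hypothetical.

```python
import math
from statistics import NormalDist


def read_result(conv_a: int, n_a: int, conv_b: int, n_b: int,
                alpha: float = 0.05) -> dict:
    p_a, p_b = conv_a / n_a, conv_b / n_b
    diff = p_b - p_a
    # Pooled standard error for the hypothesis test (null: no difference).
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se_pooled = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    p_value = 2 * (1 - NormalDist().cdf(abs(diff / se_pooled)))
    # Unpooled standard error for the confidence interval on the difference.
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return {
        "relative_lift_pct": 100 * diff / p_a,
        "abs_diff_ci": (diff - z_crit * se, diff + z_crit * se),
        "p_value": p_value,
        "significant": p_value < alpha,
    }


# Hypothetical counts: 1,500 vs 1,650 conversions on 50,000 visitors per arm.
result = read_result(conv_a=1500, n_a=50_000, conv_b=1650, n_b=50_000)
```

The interval here is on the absolute difference in conversion rate. An interval on relative lift needs an extra step (for example the delta method or a bootstrap), which this sketch leaves out.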

Hypothesis library, power calc, no peeking.

Our testing engagements run on a hypothesis library scored by impact-to-effort, pre-launch sample size and duration calculated in Optimizely or VWO, server-side variant builds where possible, and a strict no-peek rule until the planned visitor count is reached. Winners ship as Liquid or React PRs into your production repo, not as ongoing VWO overlays paying licence fees forever.

What A/B testing actually means

A/B testing, explained without the dashboard theatre

When you commission an A/B test you are buying statistical evidence that one variant outperforms another on a specific metric, in a specific segment, with a specific margin of error. Not a screenshot of VWO saying variant B is winning by 12% on day three. The discipline is closer to clinical trial design than to marketing experimentation.
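A short simulation makes the day-three screenshot problem concrete. The sketch below runs A/A tests, where both arms share the same true conversion rate, and compares how often a "winner" appears if you check daily versus once at the end. All parameters (500 simulations, 14 days, 100 visitors per arm per day, 10% conversion) are assumptions chosen to keep the example fast.

```python
import math
import random
from statistics import NormalDist


def p_value(conv_a: int, conv_b: int, n: int) -> float:
    """Two-sided two-proportion z-test with n visitors in each arm."""
    pooled = (conv_a + conv_b) / (2 * n)
    if pooled in (0.0, 1.0):
        return 1.0
    se = math.sqrt(pooled * (1 - pooled) * 2 / n)
    z = (conv_b - conv_a) / n / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def false_positive_rates(simulations=500, days=14, daily_per_arm=100,
                         rate=0.10, seed=1):
    rng = random.Random(seed)
    peeking_hits = final_hits = 0
    for _ in range(simulations):
        conv_a = conv_b = n = 0
        ever_significant = False
        for _day in range(days):
            conv_a += sum(rng.random() < rate for _ in range(daily_per_arm))
            conv_b += sum(rng.random() < rate for _ in range(daily_per_arm))
            n += daily_per_arm
            significant_now = p_value(conv_a, conv_b, n) < 0.05
            ever_significant = ever_significant or significant_now
        peeking_hits += ever_significant   # any daily look crossed p < 0.05
        final_hits += significant_now      # only the final read counts
    return peeking_hits / simulations, final_hits / simulations


peeking_rate, single_read_rate = false_positive_rates()
```

Both rates come from the same simulated data, so the gap is purely the effect of looking more often. The single read should sit near the nominal 5%, while daily looks typically push the rate several times higher.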

Sample size vs. duration

Sample size is the visitor count per variant you need to detect a given effect at 95% confidence and 80% power. Duration is the calendar window that count requires given your traffic. You need both. A 2-week minimum exists to cover a full business cycle, but if your sample is not hit in 2 weeks you keep running. Calling the test at exactly 14 days regardless of sample is one of the most common mistakes.
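The relationship between the two numbers is simple arithmetic, sketched below. The two-week floor comes from the paragraph above and the six-week ceiling from the process description. The function name and the traffic figures are hypothetical, and `daily_visitors` is assumed to mean visitors entering the test per day across all variants.

```python
import math

MIN_DAYS = 14      # two-week floor
MAX_DAYS = 6 * 7   # six-week ceiling before a test is rejected or re-scoped


def run_window(sample_per_variant: int, daily_visitors: int,
               variants: int = 2) -> tuple[int, bool]:
    """Return (days to run, whether the test fits inside the ceiling)."""
    days_for_sample = math.ceil(sample_per_variant * variants / daily_visitors)
    days = max(MIN_DAYS, days_for_sample)
    return days, days <= MAX_DAYS


quick = run_window(sample_per_variant=5_000, daily_visitors=2_000)     # sample hit in 5 days, still runs 14
typical = run_window(sample_per_variant=53_000, daily_visitors=3_000)  # 36 days
too_slow = run_window(sample_per_variant=53_000, daily_visitors=1_000) # 106 days, over the ceiling
```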

Server-side vs. client-side testing

Server-side variants render the variant HTML before the page reaches the browser. No flicker, no SEO risk, Googlebot sees a stable page. Client-side tools like VWO and Optimizely Web rewrite the DOM after first paint, causing visible flicker and hiding variant content from crawlers. For anything visible above the fold or anything that affects indexable content, server-side wins.

Frequentist vs. multi-armed bandit

Frequentist A/B testing (the default) is the right tool when you want a clear win or loss with calibrated false positive rates. Multi-armed bandit allocation is the right tool for ongoing CRO on surfaces where exploration cost is high and you want traffic to drift toward the winner as evidence accumulates. We use bandits for headline rotation and product recommendations, not for high-stakes redesign tests.
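To show how bandit allocation drifts traffic toward the better arm, here is a minimal Thompson sampling sketch with Beta posteriors. Thompson sampling is one common bandit algorithm and an assumption here, as are the arm names and true conversion rates, which a real test would not know in advance.

```python
import random


def thompson_pick(stats: dict, rng: random.Random) -> str:
    # Draw a plausible conversion rate per arm from Beta(successes + 1, failures + 1)
    # and serve the arm with the highest draw.
    draws = {arm: rng.betavariate(s + 1, f + 1) for arm, (s, f) in stats.items()}
    return max(draws, key=draws.get)


def simulate(true_rates: dict, visitors: int = 5000, seed: int = 7) -> dict:
    rng = random.Random(seed)
    stats = {arm: (0, 0) for arm in true_rates}   # (successes, failures)
    pulls = {arm: 0 for arm in true_rates}
    for _ in range(visitors):
        arm = thompson_pick(stats, rng)
        pulls[arm] += 1
        s, f = stats[arm]
        converted = rng.random() < true_rates[arm]
        stats[arm] = (s + 1, f) if converted else (s, f + 1)
    return pulls


# Hypothetical headlines with made-up true conversion rates.
pulls = simulate({"headline_a": 0.04, "headline_b": 0.12})
```

Because allocation shifts during the run, the losing arm ends up with a small sample. That is acceptable for headline rotation, but it is the reason a bandit does not give the calibrated win-or-loss read a fixed-sample test does.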

Segment-level reads

An overall flat A/B test result often hides a strong positive effect in one segment (mobile, returning visitors, paid traffic) cancelling a negative effect in another. We pre-register the segment cuts in the test plan so segment-level reads are confirmatory, not p-hacked after the fact. Common cuts: device, traffic source, new vs. returning, geo, customer cohort.
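The cancelling effect is easy to reproduce with made-up numbers. In the sketch below a 20% lift on mobile and a 12% drop on desktop net out to exactly zero overall, and a segment read is refused unless the cut was pre-registered. The counts, the `PREREGISTERED_CUTS` set and the function names are all hypothetical.

```python
PREREGISTERED_CUTS = {"device"}  # cuts agreed in the test plan before launch

# (conversions, visitors) per arm, illustrative numbers only.
results = {
    "device": {
        "mobile": {"control": (300, 10_000), "variant": (360, 10_000)},
        "desktop": {"control": (500, 10_000), "variant": (440, 10_000)},
    }
}


def relative_lift(control: tuple, variant: tuple) -> float:
    (conv_c, n_c), (conv_v, n_v) = control, variant
    return (conv_v / n_v - conv_c / n_c) / (conv_c / n_c)


def overall_lift(cut: str) -> float:
    segments = list(results[cut].values())
    control = tuple(sum(s["control"][i] for s in segments) for i in (0, 1))
    variant = tuple(sum(s["variant"][i] for s in segments) for i in (0, 1))
    return relative_lift(control, variant)


def segment_lifts(cut: str) -> dict:
    if cut not in PREREGISTERED_CUTS:
        raise ValueError(f"'{cut}' was not pre-registered; treat any read as exploratory")
    return {name: relative_lift(arms["control"], arms["variant"])
            for name, arms in results[cut].items()}


flat = overall_lift("device")       # 0.0
by_device = segment_lifts("device")  # mobile positive, desktop negative
```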

Win rate as honesty signal

Industry average A/B test win rate is roughly 1 in 8 (around 13%). Anything materially higher reported by an agency is a flag: peeking, p-hacking, declaring partial wins as wins, or testing tiny changes designed to never lose. Our reported win rate sits in the 12-18% band depending on client maturity, which we treat as a feature, not a bug.

Multivariate testing reality

Multivariate testing (testing several elements simultaneously) needs the full per-variant sample for every combination, so a test with N variant combinations requires roughly N times the per-variant sample size of an A/B test. On most ecommerce sites you simply do not have the traffic. We default to sequential A/B tests and only run multivariate when the surface is a homepage with millions of visitors per month and the design team needs interaction effects.
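The traffic cost follows directly from counting combinations. A rough sketch, assuming a full-factorial design where every combination needs the same per-variant sample; the element counts and the 20,000 per-variant figure are hypothetical.

```python
import math


def multivariate_total_sample(levels_per_element: list[int],
                              sample_per_variant: int) -> tuple[int, int]:
    """Return (number of combinations, total visitors needed)."""
    combinations = math.prod(levels_per_element)
    return combinations, combinations * sample_per_variant


# 3 headlines x 2 hero images x 2 button labels, 20,000 visitors per combination.
combos, total = multivariate_total_sample([3, 2, 2], sample_per_variant=20_000)
ab_total = 2 * 20_000  # the same per-variant sample in a plain A/B test
```

Twelve combinations need 240,000 visitors against 40,000 for the two-arm test, before any allowance for the extra comparisons being made.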

Why LASEO vs alternatives

A/B testing compared honestly

Generalist CRO agencies, VWO-only setups and in-house experimentation teams all have legitimate use cases. Here is where LASEO fits.

Peeks early, calls winners on 50 visitors

- Test starts, results checked daily, winner called on day three when the dashboard turns green at 200 visitors per variant.

- Mid-test screenshots sent weekly to the client. Tests stopped early when results look encouraging. False positive rate well above the nominal 5%.

- Everything built client-side in VWO or Optimizely Web. Visible flicker on first paint. Variant HTML hidden from Googlebot, SEO risk on indexable content.

- 70 to 90% reported win rate, achieved through peeking, p-hacking, declaring partial wins, and testing tiny changes designed never to lose.

- Winning variant left running indefinitely inside VWO. Client keeps paying licence fees on variants that should just be the default. Tool dependency grows over time.

- Wins remembered, losses quietly forgotten. Same hypothesis re-tested two years later by a new account manager who did not know it had failed before.

Pre-registered, fully powered, no peeking

- Sample size calculated in Optimizely or VWO at 95% confidence and 80% power before launch. Test runs until the calculated visitor count is reached. No exceptions outside pre-agreed sequential plans.

- Zero peeking. Internal verification only that variant exposure is collecting correctly. Reads happen at the planned sample, not before.

- Server-side default via GrowthBook, Statsig or custom feature flags. Liquid for Shopify Plus, React for headless. Client-side reserved for low-stakes copy tests on non-indexable surfaces.

- 12 to 18% win rate reported honestly. Industry average is roughly 1 in 8 and we treat that as a benchmark, not a target to beat through statistical sleight of hand.

- Winning variant ships as a Liquid or React PR into your production repo. The feature flag is removed. The variant becomes the default. No licence fees on permanent winners.

- Shared Notion hypothesis library logs every test, win or lose, with the test plan, result PDF and reasoning. Searchable across the full client history. Losers stay archived.

A/B testing engagements at scale

Testing programs that paid back the retainer many times over

What our testing clients say

A/B testing in their own words

Our previous agency had a 78% win rate. LASEO sits at 14%. Sounds worse until you realise the previous wins never showed up in revenue. The LASEO winners do. Last quarter the new checkout variant lifted completed orders by 6.2% with a 95% confidence interval of 3.1 to 9.3%, and the revenue uplift held in the next month.

They were the first agency that refused to peek when our COO asked for a mid-test screenshot. We tested the new pricing page for 28 days, got a 4.8% lift on trial starts with a 95% CI of 1.9 to 7.7%, and the result held when we shipped it to 100%. That kind of discipline is rare.

We moved from client-side VWO to server-side GrowthBook with LASEO. Flicker on the homepage hero is gone, our Core Web Vitals LCP dropped from 2.8s to 1.9s, and the same A/B program is now indexable. The redesigned category page variant won at 11.3% lift with a CI of 7.2 to 15.4% over 6 weeks.

Honest answers about A/B testing

What buyers actually ask before commissioning an A/B testing program.

How long should an A/B test run?

An A/B test should run until your pre-calculated sample size is reached, with an absolute minimum of two weeks plus one full business cycle. Two weeks covers weekly variation in traffic and behaviour, and the business cycle covers monthly variation (payday, billing cycles, weekend vs weekday patterns). If your traffic is low and the calculator says you need 6 weeks to hit sample, you run 6 weeks. Calling a test at exactly 14 days regardless of sample size is one of the most common reasons CRO wins do not stick: the test was underpowered and the apparent lift was statistical noise.

Test plan review

Bring an A/B test to a senior consultant.

30 minutes with a LASEO senior consultant. Send us your last three test plans, your current dashboard, or a screenshot of a test you are unsure about. We will tell you whether the sample size, segment cuts and statistical method hold up.